Lydia Nishimwe

AI Research Scientist | PhD

Biography

AI Research Scientist working on large-scale Transformer models, with a focus on how they behave under distribution shift and how to make them more robust to real-world data.

I completed my PhD at Inria Paris and Sorbonne Université, where I studied the interaction between data, scale, and model behaviour in neural systems, from representation learning to generation.

My work sits at the intersection of model design, evaluation, and real-world reliability, with a particular interest in understanding and improving how AI models generalise beyond clean benchmarks.

Beyond research, I enjoy writing, public speaking, and exploring languages and cultures.

Currently exploring research scientist and applied AI roles.

Nishimwe (/niːʃiːmŋé/) is a Rwandan name meaning ‘Thanks be to God’.

Fun fact: there is another Lydia Nishimwe who is a singer—we are not related.

- LLMs & Foundation Models

- Representation Learning

- Robustness, Evaluation & Distribution Shift

- Multimodality & Multilinguality

- PhD in Computer Science, 2021-2025

  Inria Paris, Sorbonne Université

- MEng in Mathematics and Computer Science, 2017-2021

  École Centrale de Nantes

- BSc in Mathematics and Computer Science, 2014-2017

  Université Grenoble Alpes

Languages

Native

Native

Advanced

Intermediate

Intermediate

Elementary

Experience

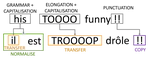

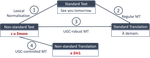

Topic: Robust Neural Machine Translation of User-Generated Content

Supervised by Benoît SAGOT and Rachel BAWDEN, defended on June 18, 2025

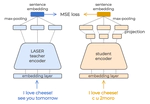

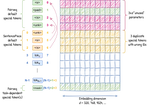

- Designed and trained large-scale Transformer models (100M–1.3B parameters) for multilingual representation learning and generation.

- Studied how performance evolves under data scale, noise, and distribution shift, using datasets ranging from thousands to hundreds of millions of samples.

- Developed robust representation learning approaches, aligning noisy and standard inputs to improve generalisation.

- Explored trade-offs between normalisation, robustness, and semantic preservation using BERT-style models and LLMs.

- Fine-tuned multilingual models on real-world data, demonstrating robustness gains without sacrificing standard performance.

- Analysed scaling effects, showing that larger models reduce sensitivity to surface noise but introduce new distribution-related failure modes.

- Framed robustness as learning across heterogeneous data distributions, with implications for real-world and multimodal AI systems.

Tech stack: Python, PyTorch (Fairseq, Hugging Face Transformers), Pandas, Scikit-Learn, SLURM

- Studied sequence generation strategies, analysing trade-offs between quality, latency, and search complexity.

- Designed controlled experiments to isolate decoding behaviour from model architecture.

- Identified key failure modes in neural generation, shaping an interest in evaluation and reliability of generative models.

Tech stack: Python, TensorFlow, Keras, Pandas, Scikit-Learn

- Integrated an external LMS (Opencast) into a production platform, focusing on API reliability and data flow across services.

- Worked on backend systems in Erlang, gaining early exposure to distributed system interactions.

Tech stack: Erlang, HTTP

Topic: Functional verification of an ARM7 microprocessor

- Verified and debugged an ARM7 microprocessor using VHDL simulations and assembly-level test programs.

- Investigated execution behaviour (pipeline hazards, instruction decoding), developing an early focus on system correctness and reliability.

Tech stack: VHDL, C, ARM Assembly, ModelSim

🏆 Stage d’excellence (Excellence Internship Program) - Université Grenoble Alpes 🏆

- Modelled and simulated GPS trajectories using synchronous functional languages (Lustre, Lutin).

- Worked on temporal system behaviour, introducing a formal and structured approach to modelling and reasoning.

Tech stack: Lutin, Lustre

🏆 Stage d’excellence (Excellence Internship Program) - Université Grenoble Alpes 🏆